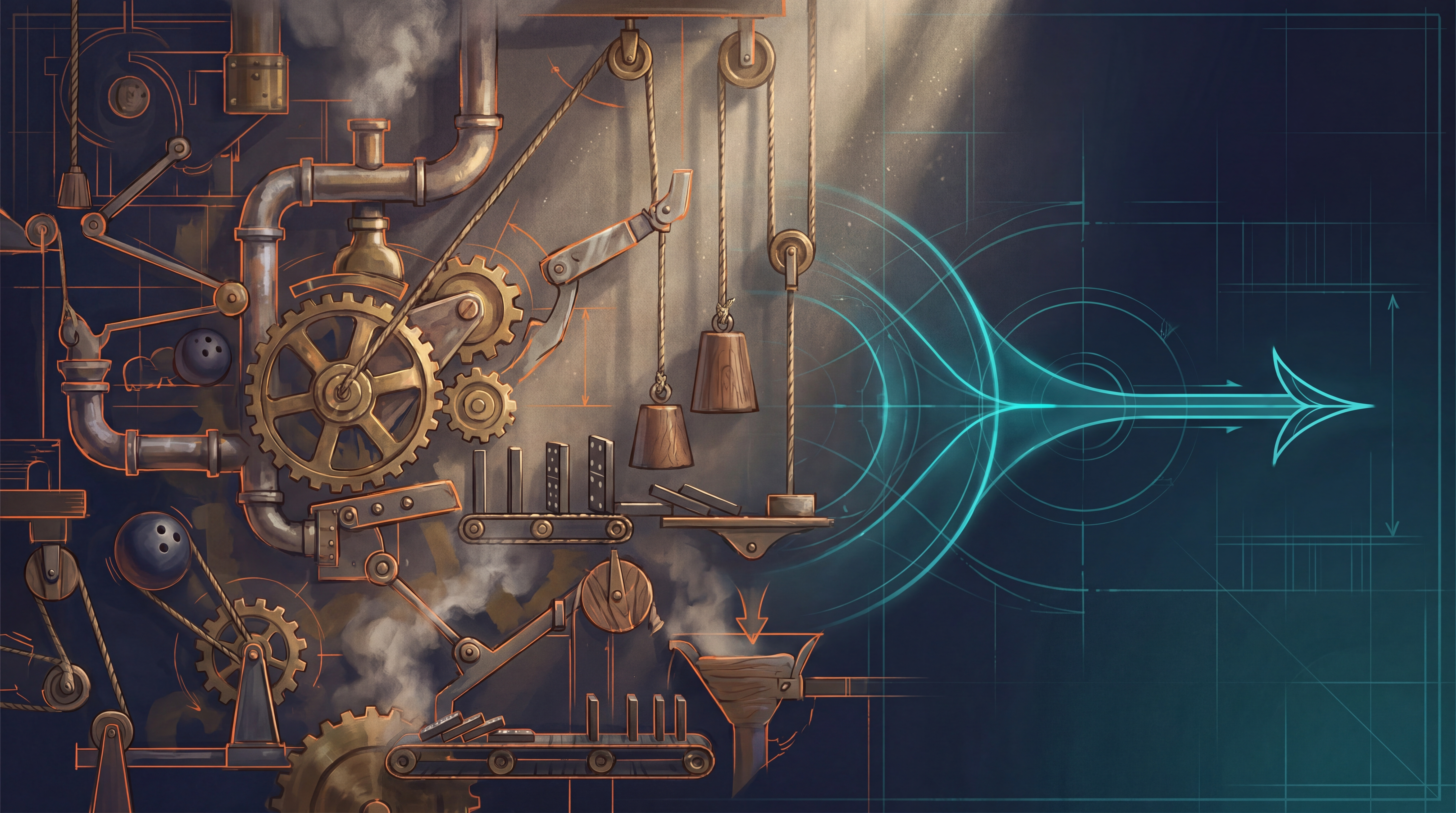

Don't Automate the Rube Goldberg Machine

For three years I tried to implement a CI/CD strategy at work and it kept falling on its face. Multiple internal teams. Multiple consultancies. Multiple plans. Every attempt collapsed under its own weight. There was always too much to automate.

I blamed tooling. I blamed scope. I blamed staffing. The actual problem was that I was trying to automate a Rube Goldberg machine — and AI is what finally helped me see it.

This post is about that moment, and how it fits into a broader shift in how I work with AI: from chatbot, to CLI agent, to multiple parallel agentic sessions orchestrating MCPs and command-line tools on my behalf. It has been the single biggest force multiplier I’ve experienced in two decades of doing this work.

The personal force multiplier came first

Before any of the work breakthroughs, AI started paying for itself in my personal life.

In the past year I’ve used AI to surface thousands of dollars in tax refunds I would not have caught — credits, deductions, and reconciliation errors that a paid preparer missed. More recently, while planning a crawlspace cleanup and insulation upgrade ahead of a heat pump install, AI flagged that Puget Sound Energy (PSE) offers rebates for that exact combination of work. I passed the details to my contractor, and we’re on track for roughly $4,500 in rebates I would have otherwise left on the table.

I’ve used it to plan vacations end-to-end. I’ve used it to draft, edit, and pressure-test communications I would have spent a Saturday agonizing over. I’ve even handed it my health data and let it track my sodium intake from text messages.

None of this is exotic. The pattern is what matters: when you load enough context into a capable model and ask better questions, it returns money, time, and judgment back to you.

The evolution: chatbot → CLI → orchestrated parallel sessions

My usage curve has moved through four phases:

- Chatbot. Single-turn questions and answers in a browser. Useful, but hit a ceiling fast. The model couldn’t see my files, my repos, or my real context.

- Claude Code (CLI agent). Putting an agent on my actual filesystem, with read/write access to my codebase, was a step-change. Not “tell me how to do this” — “do this, here’s the project.” I wrote about what that looked like day-to-day once it became my default working surface.

- OpenClaw — always-on, multi-channel. OpenClaw is an open-source personal AI assistant that runs locally and answers from the channels you already use — WhatsApp, Telegram, Slack, iMessage, Discord. It has persistent memory, a skills system, and the ability to run shell commands, browse the web, and operate your machine. I ran it as a 24/7 personal assistant — a “Jarvis” sitting on top of my own data and tools. A month in, it was actually doing real work: genuinely useful for off-keyboard tasks like dictating a request from the car, getting the answer back as a message, or kicking off cron-driven background jobs. The operating model — agent on your hardware, your skills, your context, your control plane — is the right one.

- Back to Claude Code, parallel sessions, MCP-mediated. For deep engineering work I came back to Claude Code, but with a different shape than where I started. I’d already run both side by side on Telegram, and eventually Claude Code subsumed enough of OpenClaw’s job that I stopped reaching for it. Now multiple sessions run simultaneously, each with a distinct role, talking to MCP servers and CLIs on my machine. One session owns the website. Another owns DevOps work. Another reads its own knowledge base in Obsidian and drafts internal updates. They don’t collide because each operates in a scoped working directory with its own context. I orchestrate the sequence. OpenClaw still has a place for ambient, message-driven tasks — but for high-bandwidth keyboard-and-screen work, parallel Claude Code sessions are where the leverage is.

This is not a parlor trick. It is a real shift in operating leverage. A single human, with a coherent set of agents and the right protocols between them, can carry the load of a small team — provided the human stays disciplined about what to delegate and what to keep.

Three years of failed CI/CD — what AI helped me see

Back to work. The CI/CD problem looked technical. I treated it as a tooling and pipeline gap. We needed better GitHub Actions, better deployment scripts, better QA gates, better integrations with our test platforms. Every attempt got bogged down in the sheer surface area of what we were trying to automate.

I sat down with Claude — armed with our actual workflow documentation, our Jira configuration, our deployment history — and asked it to help me understand why every plan kept collapsing.

The answer was uncomfortable: we didn’t have a CI/CD problem. We had a workflow problem.

Our existing process had 20 status transitions, 11 handoffs, 7 sequential approval gates, and 4 redundant QA phases. A ticket took 9 to 30 days from idea to production on the happy path. Most of that time wasn’t writing code. It was waiting, re-explaining context, duplicating information across three tools, and manually moving tickets between statuses that didn’t reflect anything observable.

Stripped to first principles, software delivery has five steps that actually create value:

- Someone identifies a need.

- Someone decides it’s worth doing.

- Someone writes the code.

- A machine verifies the code works.

- The code goes live.

Five steps. Our process had twenty. The fifteen-step delta was muda — the Lean term for waste. Inventory. Over-processing. Waiting. Duplication.

Why would I want to automate that? Automating waste doesn’t eliminate it. It just makes it run faster while preserving every dysfunction inside it. Innovation breaks things — and any culture that has accreted twenty status transitions has long since stopped letting it.

This is the Rube Goldberg insight: take ten steps back from the contraption, and you realize the elaborate sequence of pulleys and levers produces nothing of value. Automating it doesn’t help. The right move is to rip it out and rebuild around what actually creates value.

The DevOps Handbook gave me the language

Once I had the insight, I needed a vocabulary that would let me defend it to leadership and the rest of the engineering org. I went back to the source material — Gene Kim, Jez Humble, Patrick Debois, and John Willis’s The DevOps Handbook — and the picture sharpened.

The Three Ways aren’t slogans. They are operational principles:

- First Way — Flow: accelerate work left-to-right, in small batches, by eliminating waste and elevating the constraint.

- Second Way — Feedback: amplify signals right-to-left through telemetry, DORA metrics, SLOs, and the ability for anyone to halt the line.

- Third Way — Continual Learning: blameless postmortems, GameDays, kaizen, and converting local insights into global improvements.

What I’d been calling a “force-multiplier redesign” was Theory of Constraints. What I’d been calling a “muda elimination strategy” was Value Stream Mapping. What I was about to build was a deployment pipeline in the canonical Continuous Delivery sense.

Naming the work in shared language did two things: it made the plan defensible to anyone DevOps-literate, and it told me where my plan was thin. My original scope was strong on Flow and weak on Feedback and Learning. So I added the missing pieces: DORA instrumentation, SLOs and error budgets, a postmortem ritual, quarterly GameDays, a kaizen rhythm, and a formal Value Stream Map.
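For anyone who has not worked with error budgets, the arithmetic behind that item is small enough to sketch. This is a minimal illustration, not my team's actual tooling: the function names are hypothetical, and the 99.9% target and 30-day window are assumed numbers chosen only to show the calculation.

```python
def error_budget_minutes(slo_target: float, window_days: int = 30) -> float:
    """Minutes of allowed unavailability for an availability SLO over a window."""
    if not 0.0 < slo_target < 1.0:
        raise ValueError("slo_target must be a fraction strictly between 0 and 1")
    total_minutes = window_days * 24 * 60
    return (1.0 - slo_target) * total_minutes


def budget_remaining(slo_target: float, downtime_minutes: float,
                     window_days: int = 30) -> float:
    """Fraction of the error budget still unspent (negative once overspent)."""
    budget = error_budget_minutes(slo_target, window_days)
    return (budget - downtime_minutes) / budget
```

With those assumed numbers, a 99.9% target over 30 days allows about 43.2 minutes of downtime. Once `budget_remaining` drops toward zero, the usual convention is to slow feature releases and spend the time on reliability instead.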

Eliminate before you automate

The hardest decision was killing Jira. Not migrating it, not reconfiguring it — eliminating it entirely.

Jira had become a no-value system for my team. Marketing already worked in Asana. Internal stakeholders already worked in ServiceNow. Engineers already lived in GitHub. Jira existed as a fourth tool whose only job was to mirror the other three poorly, with manual status transitions that nobody trusted and that never matched the actual state of the code.

Three sources of truth, plus a fourth that everyone had to update by hand, is not project management. It is information duplication tax.

The redesign:

- Intake stays where stakeholders already are — Asana for marketing requests, ServiceNow for IT and LPM-aligned work.

- Engineering work lives in GitHub Issues, in the same place as the code. One backlog. One project board.

- Status is derived from PR state, not manually transitioned. If a branch exists, the issue is in progress. If a PR is open, it’s in review. If the PR is merged, the deployment pipeline owns the rest.

- Deployment is a self-verifying pipeline: PR merged → DEV → TEST → automated QA → LIVE. No release queue. No bi-weekly batch. No stakeholder sign-off bottleneck. Stakeholders validate in production with the safety net of monitoring and rollback.

Twenty statuses become five meaningful states. Eleven handoffs become three. Seven approval gates become one — the PR code review, where it belongs.
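The "status is derived, not transitioned" rule can be sketched as a pure function over facts that are observable in GitHub. This is an illustration under assumptions: the five state names, the `IssueSnapshot` fields, and the `"open"`/`"merged"` strings are hypothetical stand-ins, not the names on our actual board or values returned by any particular API.

```python
from dataclasses import dataclass
from enum import Enum
from typing import Optional


class Status(Enum):
    # Hypothetical names for the five derived states.
    BACKLOG = "backlog"
    IN_PROGRESS = "in progress"
    IN_REVIEW = "in review"
    DEPLOYING = "deploying"
    LIVE = "live"


@dataclass(frozen=True)
class IssueSnapshot:
    """Observable facts about one issue (field names are assumptions)."""
    has_branch: bool = False
    pr_state: Optional[str] = None  # None, "open", or "merged"
    live_deployed: bool = False     # set by the pipeline, never by a person


def derive_status(issue: IssueSnapshot) -> Status:
    """Compute status from observable state; nobody moves a ticket by hand."""
    if issue.pr_state == "merged":
        # After merge, the deployment pipeline owns the rest.
        return Status.LIVE if issue.live_deployed else Status.DEPLOYING
    if issue.pr_state == "open":
        return Status.IN_REVIEW
    if issue.has_branch:
        return Status.IN_PROGRESS
    return Status.BACKLOG
```

Because the function has no side effects and no stored status field, the board cannot drift from the code: re-running it against current repository state always gives the same answer.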

This is what “eliminate before you automate” looks like in practice. The amount of automation work shrinks once you stop trying to automate the dysfunction.

And then GitHub Agentic Workflows showed up

Just as I was rebuilding around GitHub Actions, I found something GitHub had quietly released several months earlier, and frankly it's embarrassing that I almost missed it: GitHub Agentic Workflows (gh aw).

The pitch is simple and the implications are not. You write your automation as a Markdown file with YAML frontmatter and a natural-language prompt. You run gh aw compile and it generates a sandboxed, SHA-pinned .lock.yml GitHub Actions workflow. The workflow runs on a schedule or on-demand, calls the GitHub MCP server to read your repos and projects, and writes structured outputs — issues, comments, PRs, labels — through a safe-output layer that limits what the agent can actually do.

Translation: I can now embed AI decision-making directly into our CI surface, with the same reliability and audit trail as a normal GitHub Action. (If you’ve seen my Bluesky-posting GitHub Action, it’s the same pattern, just with an agent in the driver’s seat instead of a fixed script.)

A handful of patterns I have queued up:

- Daily standup report. Cron-triggered. Reads the Project board, lists per-developer in-progress items, weekly commitments, and blockers. Replaces the manual daily summary I used to write.

- PR triage. Auto-labels PRs, assigns reviewers, validates that the issue link and acceptance criteria are present.

- Issue triage. Classifies new issues, suggests an assignee, applies labels.

- Release notes. Generates release notes from merged PRs since the last tag.

- KPI report. Weekly markdown roll-up of cycle time, throughput, and DORA metrics from issue and PR history.

- Dependency review. Summarizes Dependabot PRs and flags breaking changes for human review.

The first one, the standup report, is already running. The rest are a template away. And the agent files (just .md files in .github/workflows/) are versionable, reviewable, and auditable like any other code in the repo.
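For the KPI report in the list above, the underlying math is simple once the PR history is in hand. Here is a rough sketch, assuming the history has already been fetched into plain records. The `MergedPR` type and both function names are hypothetical, and measuring from PR open to merge is a simplification of my own: DORA's lead time for changes runs from commit to production.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta
from statistics import median


@dataclass(frozen=True)
class MergedPR:
    """One merged pull request (a hypothetical, pre-fetched record)."""
    opened_at: datetime
    merged_at: datetime


def median_cycle_time_hours(prs: list[MergedPR]) -> float:
    """Median hours from PR opened to PR merged."""
    if not prs:
        raise ValueError("need at least one merged PR")
    hours = [(pr.merged_at - pr.opened_at).total_seconds() / 3600 for pr in prs]
    return median(hours)


def weekly_throughput(prs: list[MergedPR], week_start: datetime) -> int:
    """Count PRs merged in the 7 days beginning at week_start."""
    week_end = week_start + timedelta(days=7)
    return sum(1 for pr in prs if week_start <= pr.merged_at < week_end)
```

The median is used rather than the mean so that one long-lived PR does not dominate the weekly number.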

What this unlocks

Stack the layers and the picture is different from where I was a year ago.

- I have agents on my own machine that catch tax refunds I would have missed, plan vacations, draft business communications, and refactor code in parallel.

- I have a workflow at work where AI helped me see the actual problem — that I was automating waste — and gave me the language to defend the redesign.

- I have a CI surface where AI is now a participating workflow node, not a separate tool I have to remember to consult.

The pattern is consistent: AI is most valuable not when it answers questions, but when it operates inside the systems I already use, with enough context to make decisions and enough constraints to be trustworthy.

If you’re trying to automate something at your job and it keeps falling apart, the question worth asking before you write another line of YAML is: am I about to automate a Rube Goldberg machine? If the answer is yes, the next move isn’t a better automation tool. The next move is a pair of metaphorical bolt-cutters.

Eliminate before you automate. Then let the agents do their job.

Related Reading

- It’s Not What You Can Do — It’s What You Can Get Done — Why delegation is the underlying skill that makes any of this work

- The Cost of Every Yes — The strategic frame for choosing what not to automate

- I Don’t Need OpenClaw Anymore — How my own toolchain went through the same eliminate-before-automate cycle

- Innovation Breaks Things. Culture Eats Strategy. — Why culture, not tooling, is what stops most automation efforts

- Why AI Is Different: Every Prior Tool Took Sides — The bigger arc this all sits inside

Resources

- GitHub Agentic Workflows (gh aw) — github.com/github/gh-aw

- Creating workflows — github.github.com/gh-aw/setup/creating-workflows

- Session slides (April 2026) — github.github.com/gh-aw/slides/20260407-github-agentic-workflows.pdf

- OpenClaw — openclaw.ai · github.com/openclaw/openclaw

- The DevOps Handbook — Gene Kim, Jez Humble, Patrick Debois, John Willis

- The Goal and the Theory of Constraints — Eliyahu M. Goldratt

About the Author

Kevin P. Davison has over 20 years of experience building websites and figuring out how to make large-scale web projects actually work. He writes about technology, AI, leadership lessons learned the hard way, and whatever else catches his attention—travel stories, weekend adventures in the Pacific Northwest like snorkeling in Puget Sound, or the occasional rabbit hole he couldn't resist.